EST. 2014

Monetization Platform

WMG International (known as adWMG) provides ad tech solutions for advertisers and niche publishers worldwide: tailored, tech-driven advertising built around each client's goals.

For Direct Publishers & SSPs

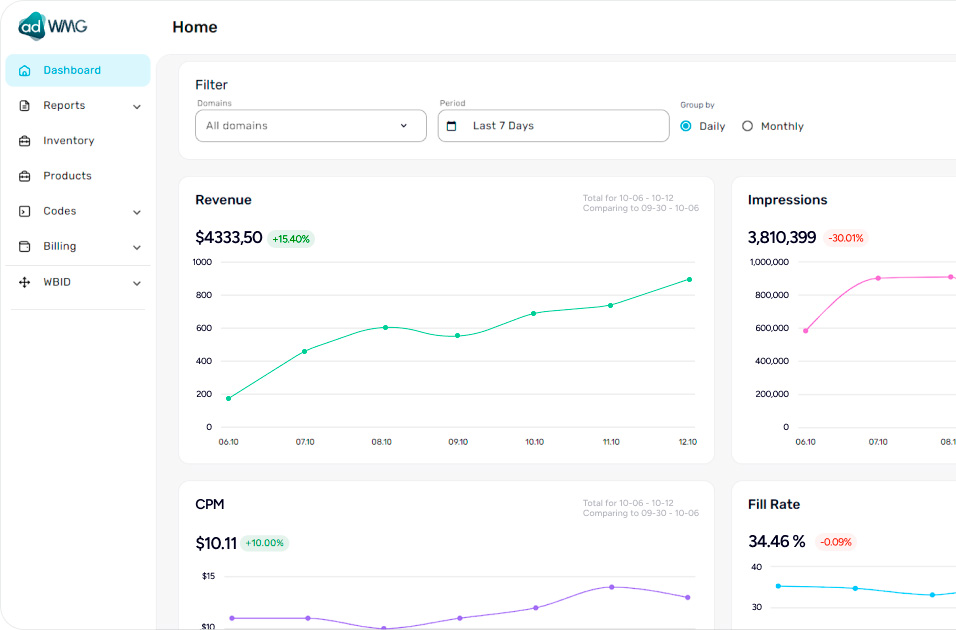

Our Analytic Report feature gathers and analyzes large amounts of data in real time. Your information is kept on WMG's secure servers, and numerous filters and metrics let you build and customize effective monetization strategies.

They trust us:

JavaScript Tag

openRTB endpoint

VAST

Prebid Client Side

10 Billion ad impressions monthly

30 high-quality SSPs

9 000 direct websites

10 000 mobile applications

Simplifying complicated technologies, WMG creates products for programmatic monetization

About us in the Media

WMG International ecosystem

Formats

Product

Device

Integration

header bidding wrapper

adWMG HB adapter

header bidding analytics

wPlayer video solution

Mobile devices

Desktop

CTV

Technical core

Audio

DSP

Direct campaigns

TAM

Header bidding

Open Bidding

SSP

Using a modern technology stack, WMG International creates effective technical solutions for publishers worldwide.